In search engine optimization, the usual goal is to get as many of your website’s pages as possible crawled and indexed by search engines like Google so that they rank higher.

A common misconception is that indexing more pages always leads to better SEO rankings, but that is not always the case. Often, it is necessary to deliberately prevent search engines from indexing certain pages of your website to boost SEO.

So now you may be wondering, how do I stop Google from indexing my web page?

As tempting as it is for marketers to index as many website pages as possible in an attempt to boost their search engine rankings, this is not always effective. Thank-you pages, where visitors land after filling out a form on your landing page, are a good example of pages to keep out of the search index. If thank-you pages are indexed, people can find your gated content through search and access or share it for free without ever filling out the form. Ultimately, that costs you leads.

There are 2 ways to ask Google not to index your pages in search:

Method #1: Via a Robots.txt File

The first way to keep a page out of search engine results is by adding a robots.txt file to your site. The advantage of this method is that it gives you more control over what bots are allowed to crawl, so you can proactively keep unwanted content out of the search results. Keep in mind that robots.txt blocks crawling rather than indexing itself: a blocked URL can still show up in results if other pages link to it.

Within a robots.txt file, you can specify whether you’d like to block bots from a single page, a whole directory, or even just a single image or file. There’s also an option to prevent your site from being crawled while still allowing AdSense ads to work. That being said, of the two options available to you, this one requires more technical skill. To learn how to create a robots.txt file, refer to Google’s documentation on robots.txt files.
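To make those options concrete, here is a minimal sketch that feeds a sample robots.txt to Python’s standard-library parser and asks which URLs a crawler may fetch. The file contents, the paths, and the `www.example.com` domain are placeholders invented for illustration, not rules taken from a real site. Python’s parser only approximates how Google matches rules, so treat the result as a sanity check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: every path below is a placeholder.
ROBOTS_TXT = """\
User-agent: Mediapartners-Google
Allow: /

User-agent: *
Disallow: /thank-you.html
Disallow: /private/
Disallow: /images/banner.png
"""

SITE = "https://www.example.com"  # placeholder domain


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_crawlable(parser: RobotFileParser, user_agent: str, path: str) -> bool:
    """Return True if the given user agent is allowed to fetch the path."""
    return parser.can_fetch(user_agent, SITE + path)


parser = build_parser(ROBOTS_TXT)

# A single page, a whole directory, and a single image are blocked for
# general crawlers, while the home page stays crawlable.
for path in ["/", "/thank-you.html", "/private/report.html", "/images/banner.png"]:
    status = "crawlable" if is_crawlable(parser, "Googlebot", path) else "blocked"
    print(f"Googlebot {path}: {status}")

# The AdSense crawler (Mediapartners-Google) has its own group allowing everything.
print(is_crawlable(parser, "Mediapartners-Google", "/thank-you.html"))
```

The first group is what keeps AdSense working: the ads crawler matches its own `User-agent` group, so the `Disallow` lines in the catch-all group do not apply to it.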

But if you don’t require all the control of a robots.txt file and just want to get straight to the point, you can consider using the second method.

Method #2: Via a “noindex” Meta Tag

Using a meta tag to prevent a page from appearing in search engine results is both effective and easy. It requires only a tiny bit of technical know-how, and it’s actually just a copy/paste job if you’re using the right content management system.

Here’s how to do it.

First, copy this tag:

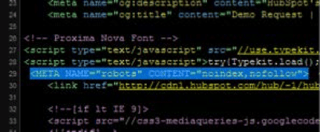

<META NAME=”robots” CONTENT=”noindex”>

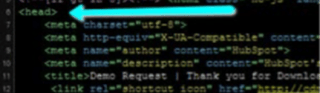

Next, paste the full tag into a new line within the <head> section of your page’s HTML (known as the page’s header).

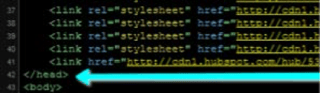

The following screenshots will walk you through it.

This signifies the beginning of your header:

And here is the meta tag within the header:

And this signifies the end of the header:

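That’s it! This tag tells search engines not to include the page in their index. For the tag to work, the page must not also be blocked in robots.txt, because a crawler has to fetch the page in order to see the tag.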
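If you want to confirm that the tag really ended up inside the header, a small script can check for you. The sketch below uses only Python’s standard library; the `RobotsMetaFinder` class, the `has_noindex` helper, and the sample page are hypothetical names and content made up for this illustration.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collects directives from <meta name="robots"> tags found inside <head>."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names for us.
        if tag == "head":
            self.in_head = True
        elif tag == "meta" and self.in_head:
            attr = {name: (value or "") for name, value in attrs}
            if attr.get("name", "").lower() == "robots":
                for directive in attr.get("content", "").split(","):
                    directive = directive.strip().lower()
                    if directive:
                        self.directives.add(directive)

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def has_noindex(html: str) -> bool:
    """Return True if the page's header carries a robots noindex directive."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives


# Hypothetical thank-you page with the tag pasted into the header.
THANK_YOU_PAGE = """\
<!DOCTYPE html>
<html>
<head>
  <title>Thank you</title>
  <meta name="robots" content="noindex">
</head>
<body><p>Thanks for signing up!</p></body>
</html>
"""

print(has_noindex(THANK_YOU_PAGE))
```

Running `has_noindex` over the HTML of each thank-you page is a quick way to spot pages where the tag is missing or was pasted outside the header.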

Remember, indexing more website pages may do you more harm than good. Start by checking whether you have been unknowingly giving gated content away without capturing visitor information.